Key Takeaways

- Erasure is happening now, and nowhere is it more consequential than in artificial intelligence—the technology shaping who gets hired, who gets credit, who gets surveilled.

- The women who built the foundations of modern computing were erased. The women now doing critical AI safety work are being erased in real time.

- Ada Lovelace wrote the first computer algorithm in 1843. Grace Hopper invented the compiler. The 'Human Computers' made NASA's moon landing possible.

- Dr. Joy Buolamwini, Dr. Timnit Gebru, Dr. Safiya Umoja Noble, and Dr. Ruha Benjamin are leading today's fight for algorithmic justice.

A Note Before We Begin

I'm a Black, queer, first-generation Togolese immigrant, living with an invisible chronic illness, who has spent 25 years building technology that often doesn't recognize people like me. I know what it's like to be in a room where the systems were built without you in mind. To have your voice go unrecognized by the assistant, your name rejected by the form, your face flagged as anomalous by the software. To watch your ideas credited to someone else—and to understand, viscerally, that this is not just your experience, but a pattern. It has a history, and that history lives in the code.

Every February, we tell stories about Black pioneers who were erased from history—mathematicians, scientists, engineers whose contributions were taken without credit, whose names were scrubbed from the record, whose work was rebuilt by someone else and celebrated as new. Every March, we do something similar for women.

And every year, across both months, we treat this erasure like a relic from the past. As if it is something that happened to other people, in another era, before we knew better. It is not.

Erasure is happening now, and nowhere is it more consequential than in artificial intelligence—the technology that is already shaping who gets hired, who gets credit, who gets surveilled, who gets approved for loans, who gets flagged as a risk, and more.

The women who built the foundations of modern computing were erased. The women who are now doing the most important work on AI safety and algorithmic justice are being erased in real time—fired, discredited, underfunded, and uncited while the institutions that ignored them race to catch up with what they proved years ago. This Women's History Month, we are telling the full story—our stories.

Part One: The Women Who Built the Foundation

Ada Lovelace: The First Programmer History Keeps Forgetting

In 1843, a 27-year-old mathematician named Augusta Ada King, Countess of Lovelace, known to history as Ada Lovelace, published a translation of the Italian mathematician Luigi Menabrea's paper on Charles Babbage's proposed Analytical Engine. With the notes she appended, the published version was more than twice as long as the original. The additions were hers.

Those additions included what is now recognized as the first algorithm intended to be processed by a machine—a method for computing Bernoulli numbers. They also included a conceptual leap that Babbage himself had not made: the recognition that the Analytical Engine could be used to process not just numbers but any symbolic operation that could be expressed logically. Music. Language. Logic itself.

Lovelace described, in 1843, what we now call general-purpose computing. She saw it before the hardware existed to run it. Her contributions were minimized in her lifetime, attributed to Babbage in decades of subsequent history, and only fully credited in the second half of the 20th century, and then only because a programming language developed for the U.S. Department of Defense around 1980 was named after her, forcing historians to revisit what she had actually done.

A programming language bears her name, but hers is not, for most people, the name that comes to mind when they think about who invented computing. That name is Charles Babbage.

Grace Hopper: The Woman Who Made Computers Speak English

In 1952, Grace Murray Hopper, who would later rise to the rank of rear admiral in the U.S. Navy, created the first compiler: a program that translates human-readable code into machine language, making programming accessible to people who weren't trained in the binary operations of hardware.

Before Hopper, programming required intimate knowledge of the specific architecture of each machine. Hopper's compiler meant that a programmer could write in something closer to natural language and have the machine do the translation. That translation step underlies virtually every programming language in use today.

Hopper also popularized the term 'debugging' after her team literally removed a moth from a relay in the Harvard Mark II computer in 1947. She championed COBOL (Common Business-Oriented Language), one of the oldest and most widely used programming languages in history, still running core systems at banks, governments, and financial institutions around the world.

She was a Rear Admiral. She was a pioneering computer scientist. She was one of the most consequential engineers of the 20th century. She is most commonly known, in popular culture, for the moth.

The Human Computers: Katherine Johnson, Dorothy Vaughan, Mary Jackson

Before NASA and its predecessor agency, NACA, had electronic computers, they had human ones: mathematicians who calculated the trajectories, orbital mechanics, and re-entry angles that put satellites and astronauts into space and brought them home. Many of those human computers were Black women. Katherine Johnson. Dorothy Vaughan. Mary Jackson. Christine Darden. Their work was classified, segregated, and systematically uncredited for decades.

The 2016 film Hidden Figures brought their names to mainstream audiences. It took a Hollywood movie to accomplish what decades of history books had not.

What most people don't know is that Dorothy Vaughan didn't just calculate. When she recognized that electronic computers were coming and would replace human computers, she learned FORTRAN—IBM's programming language—on her own, from a book she borrowed from the segregated public library. She then taught it to her entire team, all of whom were Black women. She managed the transition from human to electronic computing for the West Area Computing section at Langley—training her team for jobs the institution hadn't even created yet. Dorothy Vaughan was, functionally, the first IT manager at NASA. She did not receive that title.

Margaret Hamilton: The Woman Who Put the Code in Apollo

In 1969, when the Apollo 11 lunar module was descending toward the moon and an alarm began firing—signaling a computer overload—the mission continued rather than aborting. It continued because Margaret Hamilton and her team at MIT had anticipated exactly that failure mode and written error-prioritization routines into the software that allowed the most critical tasks to take precedence.

Hamilton is credited with coining the term 'software engineering'—she used it deliberately to argue that software development deserved the same rigor, discipline, and respect as hardware engineering. It took decades for the field to catch up to that framing.

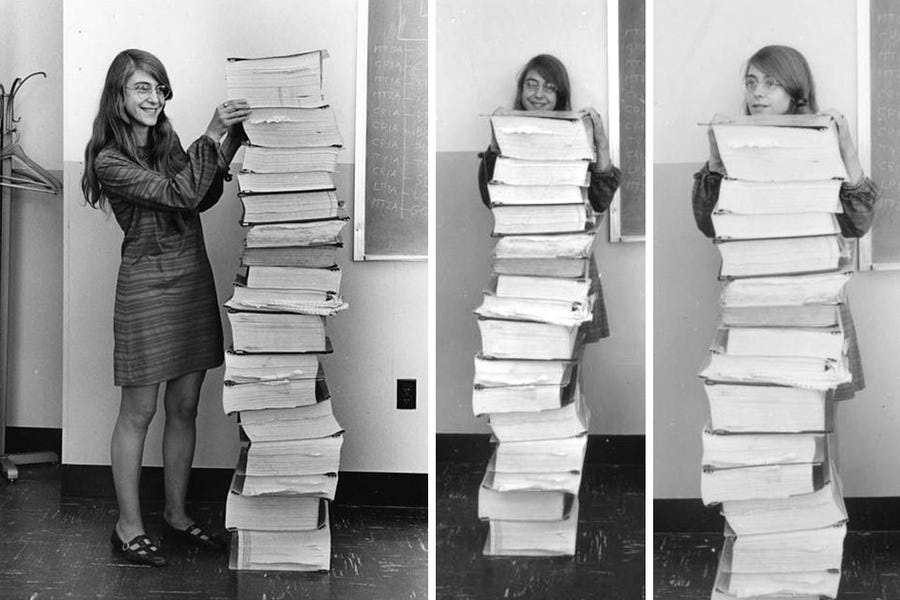

A photograph of Hamilton standing next to a stack of printed code taller than she is has become iconic. Her name is more widely known than it was 20 years ago. But ask most people who wrote the software that landed humans on the moon, and the name they reach for first is not Margaret Hamilton.

Part Two: The Pattern

These are not isolated stories of individual women who happened to be overlooked. They are examples of a systematic pattern. The pattern operates like this:

Women—and disproportionately, women of color—do foundational work. They identify problems, develop solutions, build frameworks, write the code, do the math, establish the concepts. Their contributions are minimized at the time: attributed to male colleagues, described as support work, classified, or simply not mentioned.

Years or decades later, a partial restoration happens—a film, a programming language named in someone's honor, a belated award. The restoration is real but incomplete. The names become known without the full scope of the work being understood. Meanwhile, the men who were in proximity to the work receive the primary credit, the primary funding, the primary positions of influence in the institutions that were built on that work.

This pattern is not ancient history. It is the operating system of the technology industry today.

Part Three: The Women Doing the Work Right Now—and What's Happening to Them

Dr. Joy Buolamwini: The Coded Gaze

In 2016, Dr. Joy Buolamwini was a researcher at the MIT Media Lab when she discovered that facial recognition software couldn't detect her face—until she put on a white mask.

Her subsequent research, the landmark 'Gender Shades' study, co-authored with Dr. Timnit Gebru and published in 2018, documented that commercial gender-classification systems from IBM, Microsoft, and Face++ had error rates of up to 34.7% for darker-skinned women, compared to under 1% for lighter-skinned men. She coined the term 'the coded gaze' to describe the embedded prejudice in AI systems that reflect the priorities of those who built them.

When her work specifically critiqued Amazon's Rekognition software, an Amazon executive publicly attempted to discredit her methodology—while declining to share Amazon's own internal accuracy data.

Buolamwini went on to found the Algorithmic Justice League. She published Unmasking AI in 2023. She testified before Congress. She did all of this while fighting, continuously, the institutional reflex to minimize, dismiss, and discredit research that makes powerful institutions uncomfortable.

Dr. Timnit Gebru: The Cost of Being Right

Dr. Timnit Gebru was co-lead of Google's Ethical AI team when she was fired in December 2020. The firing came after she submitted a paper—co-authored with colleagues including Emily Bender, Angelina McMillan-Major, and Margaret Mitchell—raising concerns about the risks of large language models.

The paper, 'On the Dangers of Stochastic Parrots,' documented environmental costs, training data bias, and risks of deploying AI systems whose behavior could not be fully understood or predicted. Google management requested she remove her name from the paper. She refused. She was fired.

This was not a simple employment dispute. Gebru had co-founded Black in AI, an organization building Black representation in AI research. Her work on bias had shaped how the field thought about algorithmic fairness. She was, at the time of her firing, one of the most prominent AI ethics researchers in the world.

Over 2,600 Google employees signed a letter of protest. Margaret Mitchell, Gebru's co-lead who had co-authored the same paper, would also be fired months later. Gebru founded the Distributed AI Research Institute—an independent organization explicitly structured to be free from corporate funding and therefore free to do research that corporate interests find inconvenient.

'What happened to me is not new,' she told the New York Times. 'It's the same pattern that happens to Black women everywhere.' She was right. And the pattern she named—Black women doing the most rigorous work, at the highest personal cost, on the problems that matter most—is the pattern this Women's History Month is about.

Dr. Safiya Umoja Noble, Dr. Ruha Benjamin, Dr. Rediet Abebe

These three researchers belong to a larger cohort whose work has defined what responsible AI research looks like.

Dr. Safiya Umoja Noble's Algorithms of Oppression (2018) documented how Google Search results perpetuated racist and sexist stereotypes—returning pornographic results for searches involving Black girls, while returning neutral or aspirational results for equivalent searches involving white girls. Her work forced a reckoning with how search engine results are not neutral surfaces but editorial choices that reflect the values of those who built them.

Dr. Ruha Benjamin's Race After Technology (2019) gave the field the term 'the New Jim Code'—the way technically neutral systems can encode and perpetuate racial hierarchy. She argued that the seductive promise of objectivity in algorithmic systems is itself a mechanism for laundering discrimination: by encoding bias in math, institutions can claim neutrality while producing the same discriminatory outcomes as explicitly biased policies.

Dr. Rediet Abebe, co-founder of Black in AI and a researcher whose work bridges computer science and economics, has pushed the field toward understanding that questions of algorithmic fairness cannot be separated from questions of structural inequality. You cannot mathematically define fairness without making choices about whose interests to prioritize—and those choices are not technical. They are political.

These names are known within the field. They are not, for most people outside it, the names that come to mind when the topic of AI comes up. The names that come to mind are Sam Altman. Elon Musk. Geoffrey Hinton. Yann LeCun. This is the erasure in real time.

Part Four: Why It Matters for Your Organization

I want to be specific about why this history is relevant to every organization using AI right now—because I have found, in my work, that the history is interesting to people but the organizational implications are where the real resistance lives.

The same dynamics that erased women's contributions from the history of computing—the same dynamics that fired Dr. Timnit Gebru and discredited Dr. Joy Buolamwini—are the dynamics operating inside your organization's AI governance right now.

If women, and especially women of color, are raising concerns about AI systems in your organization—about bias, about fairness, about who the system is working for and who it isn't—and those concerns are being minimized, dismissed, attributed to oversensitivity, or managed rather than investigated: you have a governance failure. Not a diversity problem. A governance failure.

The people with lived experience of being failed by systems are the people most likely to identify when a system is failing. Their concerns are not political positions to be balanced against business interests. They are technical information—about system performance, about error rates, about the populations the system is not working for.

Organizations that treat equity concerns as governance information catch bias early, when it is correctable, before it becomes a regulatory violation or a public scandal. Organizations that treat equity concerns as political noise catch bias late—in litigation, in a ProPublica investigation, in an EU AI Act enforcement action.
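As a minimal sketch of what treating a concern as technical information can look like, the Python below breaks one overall error rate out by group, in the spirit of the disaggregated audits described earlier. The group labels, the toy records, and the function names are all hypothetical, invented for illustration; none of it is drawn from the studies cited here.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Return {group: error rate} for (group, predicted, actual) records."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def largest_gap(rates):
    """Return (worst group, best group, difference in error rate)."""
    worst = max(rates, key=rates.get)
    best = min(rates, key=rates.get)
    return worst, best, rates[worst] - rates[best]


# Hypothetical toy data: 100 records per group, every true label is 1.
records = (
    [("group_a", 1, 1)] * 99 + [("group_a", 0, 1)] * 1
    + [("group_b", 1, 1)] * 70 + [("group_b", 0, 1)] * 30
)

# The single number a dashboard would usually report.
overall = sum(1 for _, p, a in records if p != a) / len(records)

# The same errors, broken out by who they fall on.
rates = error_rates_by_group(records)
worst, best, gap = largest_gap(rates)

print(f"overall error rate: {overall:.1%}")
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.1%}")
print(f"gap between {worst} and {best}: {gap:.1%}")
```

In this toy data the overall error rate is 15.5 percent, which hides the fact that one group sees 1 percent and the other 30 percent. A concern raised by someone in the second group is a report about that gap, and an aggregate metric alone would never surface it.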

The history of women being written out of AI is not just a history lesson. It is a live diagnosis of an organizational failure pattern that most companies haven't yet recognized in themselves.

Part Five: What Restoration Actually Looks Like

Restoration is not a film. It is not a programming language named after someone who died a century ago. It is not a Women's History Month social media post. Restoration is:

Citation. When you use frameworks developed by women researchers, cite them—in your reports, your presentations, your client deliverables. 'The coded gaze' is Dr. Joy Buolamwini's term. 'The New Jim Code' is Dr. Ruha Benjamin's framework. 'Algorithms of oppression' is Dr. Safiya Umoja Noble's analysis. Use the terms with their origins attached.

Compensation. Women-founded research organizations and advocacy groups doing AI accountability work—the Algorithmic Justice League, DAIR, Black in AI—are chronically underfunded relative to the corporate AI labs whose work they hold accountable. If your organization benefits from their research, fund them.

Power. Diversity in AI teams is representation, not power. Representation is a number. Power is influence over decisions: over which systems get built, how they get tested, what happens when problems are found, and who is accountable when systems fail. If the women in your organization don't have that power, the diversity number is decorative.

Safety. The most important thing your organization can do to make equity concerns visible is to make naming them safe. If people who identify AI problems are celebrated rather than managed, you will learn about problems when they are small. If they are managed, you will learn about them when they are large. This is not a values statement. It is a risk management strategy.

Discussion Questions For Your Organization

- Whose names do your employees associate with the history of computing and AI? What does the list look like by gender and race?

- When women in your organization raise concerns about AI systems (about fairness, bias, or impact on specific groups), what is the standard organizational response?

- Do you cite the researchers whose frameworks you use? Is the citation practice consistent regardless of the researcher's gender and race?

- What is the ratio of women to men in roles with actual decision-making power over your AI systems, not just in advisory or ethics roles, but in product, engineering, and governance leadership?

- What would it take for your organization to treat the Algorithmic Justice League's research with the same weight it gives to research from MIT's Computer Science department?

Resources

Algorithmic Justice League — ajl.org — Founded by Dr. Joy Buolamwini; resources, research, and advocacy on algorithmic accountability.

Unmasking AI — Dr. Joy Buolamwini (2023)

Algorithms of Oppression — Dr. Safiya Umoja Noble (2018)

Race After Technology — Dr. Ruha Benjamin (2019)

DAIR Institute — dair-institute.org — Dr. Timnit Gebru's independent AI research organization.

Black in AI — blackinai.org

Hidden Figures — Margot Lee Shetterly (2016) — The book, not just the film.

'On the Dangers of Stochastic Parrots' — Bender, Gebru, McMillan-Major, Mitchell (2021) — The paper that cost two researchers their jobs at Google.

Dr. Dédé Tetsubayashi is a Black, queer, first-generation Togolese immigrant and transracial adoptee living with sickle cell disease. She is a global advisor on AI governance, disability innovation, and inclusive technology strategy—with 25 years at the intersection of technology, policy, and liberation. Her lived experience of being coded out informs everything she builds.