Key Takeaways

- Digital blackface is not a metaphor for minstrelsy—it is minstrelsy in evolved form, using Black bodies as emotional shorthand without consequence.

- GIF platforms have structurally encoded Black expressiveness as the default language of heightened digital emotion, creating extractive feedback loops.

- The “just a GIF” defense ignores the aggregate harm of billions of instances reducing Black people to emotional costumes.

- AI-generated content is scaling digital blackface at unprecedented speed, reproducing racial stereotypes without individual accountability.

- Platform accountability requires compensation structures, equitable content moderation, algorithmic transparency, and investment in Black creators.

My original piece on digital blackface introduced the concept. The response I received was significant—from people who recognized themselves in the behavior, from Black people who finally had language for something that had always felt wrong, and from people who pushed back, defensively, insisting that a GIF is just a GIF. This deep dive is for all of you.

Part One: Understanding the History

Minstrelsy Is Not Ancient History

Most people, when they think of blackface, think of 19th- and early 20th-century stage performances: Al Jolson in white gloves and burnt cork, a grotesque tradition safely confined to history textbooks. This framing is convenient. It is also false.

Blackface minstrelsy didn’t end with the vaudeville era. It evolved. In its original form, minstrelsy was a systematic dehumanization project. White performers “blacked up” to portray Black people as lazy, childlike, hypersexual, comedically exaggerated—a catalog of stereotypes designed to justify the hierarchies of slavery and, later, Jim Crow. The audience didn’t just laugh; they learned. They were taught what “Blackness” meant, and they were taught it through caricature.

Digital blackface is not a metaphor for something like minstrelsy. It is minstrelsy. The form has changed. The function—using Black performance for white entertainment and emotional expression, without consequence—has not.

What Lauren Michele Jackson’s Foundational Analysis Taught Us

In 2017, writer and scholar Lauren Michele Jackson published “We Need to Talk About Digital Blackface in Reaction GIFs” in Teen Vogue—the article that gave the concept mainstream visibility and the language many people use today.

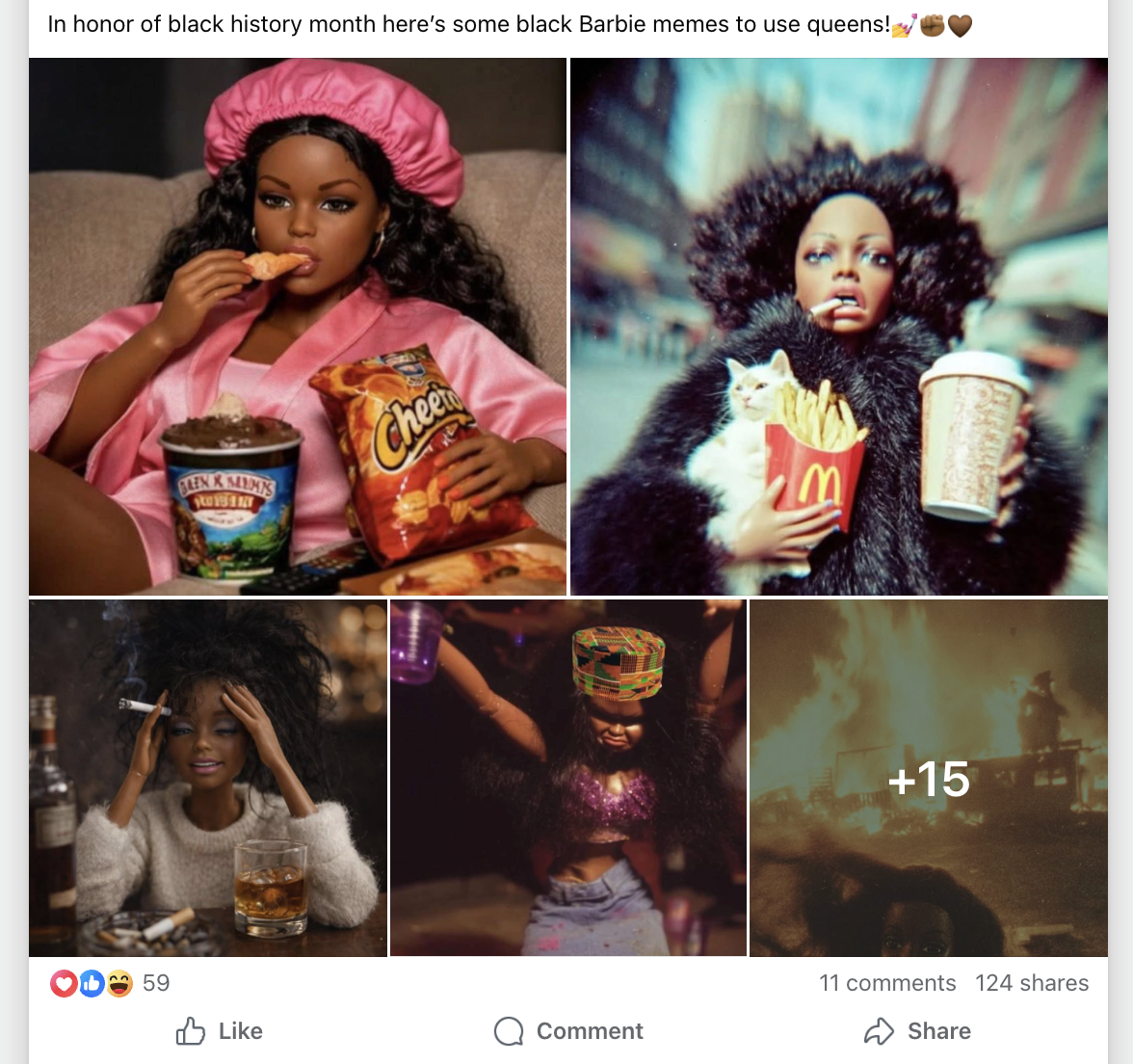

Jackson’s argument was precise: digital blackface describes various types of minstrel performance in cyberspace. When non-Black people use GIFs and memes featuring Black people—particularly to express heightened emotions, “sassy” reactions, exaggerated shock, dramatic expressiveness—they are borrowing Black bodies as emotional shorthand.

Why Black people in particular? The answer is rooted in a very old idea: that Black expressiveness is more authentic, more raw, more emotionally real than white expressiveness. This idea, that Black people have special access to emotion, is not a compliment. It is a stereotype. And like all stereotypes, it reduces people to a single dimension while denying their full humanity.

Part Two: The Mechanics of How It Works

The GIF Economy and Who Controls It

When you search for a reaction GIF on Giphy, Tenor, or Twitter’s built-in GIF search, you’re interacting with a curated database. Someone made choices about what appears first when you search “excited” or “shocked” or “yaaas.” Someone decided what the default emotional vocabulary of digital communication looks like.

The result is a GIF ecosystem in which Black expressiveness has been systematically encoded as the default language of heightened digital emotion. This is not a matter of individual users making individual choices. It is a structural system in which major technology platforms have built Black emotion into the emotional infrastructure of digital communication, without the consent or compensation of the people whose images, performances, and emotional labor built that infrastructure.

The Extractive Loop

When a Black creator’s video goes viral and their most expressive moment becomes a GIF, they typically receive no compensation, no ongoing credit as that GIF circulates millions of times, and no control over how their image is used. Their momentary expression becomes public property—emotional infrastructure for everyone else’s communication.

This extraction follows a familiar pattern. In music, it was Black artists’ innovations in blues, jazz, rock and roll, and hip-hop: appropriated, sanitized, and sold by white artists to white audiences. In fashion, it was Black aesthetics repackaged as “trends” when adopted by non-Black people. In digital culture, it is Black emotional expression repurposed as freely available communication tools. The form has changed. The extractive logic has not.

The “But It’s Just a GIF” Defense

“It’s just a GIF.” The harmlessness of individual actions is irrelevant to the harm of aggregate patterns. No individual drop of water is responsible for a flood. But if you’re standing in a flood, the philosophical innocence of individual water molecules provides no comfort.

“You’re being oversensitive.” “Oversensitive” is a word deployed to shift responsibility from those who cause harm to those who name it. When someone says “that hurts,” responding with “you’re too sensitive” is not an argument. It’s a power move.

“Intent matters.” Intent matters, but it is not the only thing that matters. A car accident caused by an inattentive driver still injures the people in the other car. If your participation in a practice causes harm—even if you didn’t intend the harm—the question isn’t resolved by pointing to your intent. It’s resolved by asking: now that I know, what will I do differently?

Part Three: Beyond GIFs — The Full Landscape

Digital Blackface in Language and Text

When non-Black people adopt African American Vernacular English (AAVE) online, using “chile,” “periodt,” “slay,” “no cap,” “and I oop,” and “it’s giving” without understanding where these terms come from or maintaining any real engagement with Black culture, they are performing a version of the same dynamic.

Black people who speak AAVE face discrimination in hiring, housing, and education. Non-Black people who borrow AAVE face no such consequences. This asymmetry is the definition of extraction.

Digital Blackface in AI-Generated Content

AI image generation tools, trained predominantly on scraped internet data, have internalized the visual languages and stereotypes of the cultures that produced that data. When users prompt AI systems to generate “expressive” or “dramatic” characters and the outputs default to racialized stereotypes, we are watching digital blackface happen at scale and at speed.

AI-generated digital blackface is not a future problem. It is happening now. And because AI-generated images circulate without the identity of a human creator attached to them, there is no individual accountable. The harm diffuses. The pattern persists.

Part Four: Platform Accountability

Black culture is, consistently, the engine of virality on social media platforms. Black creators, Black vernacular, Black aesthetics, Black humor—these drive engagement, and engagement drives advertising revenue. Platforms know this.

What Platform Accountability Would Require

- Compensation structures: For creators whose images become widely circulated GIFs and memes—particularly when used in unintended ways.

- Content moderation equity: Policies that take racial harm as seriously as copyright claims, which currently receive far more platform attention.

- Algorithmic transparency: About how content featuring Black people is distributed, promoted, and monetized compared to equivalent content.

- Investment in Black creators: Through equitable monetization, promotion, and platform governance representation—not just token creator funds.

Part Five: The Deeper Work

From Awareness to Accountability

Awareness is the first step. It is not the destination. The question isn’t whether you now understand that digital blackface exists. The question is what you do with that understanding.

For Non-Black People: Doing the Work

- Audit your GIF and meme use: Look at the images you share to express emotions. When you reach for a reaction GIF, who is in that image? What stereotype is being activated?

- Stop using AAVE you haven’t earned: Do you have genuine, ongoing relationships with Black people? Are you doing the work of understanding where the language comes from?

- Engage with the source: If you love Black culture, extend that love to the people who created it. Follow, pay for, and amplify Black creators.

- Get comfortable with discomfort: The discomfort of having done harm you didn’t know you were doing is not punishment. It is information. Let it move you toward different choices.

Where Liberation Lives

Digital blackface exists because we live in a world structured by white supremacy: a world in which Black people’s labor, creativity, expressiveness, and bodies have been treated as available resources rather than as belonging to human beings with sovereignty over their own image and expression.

Liberation in digital spaces requires what liberation requires everywhere: structural change. Platform accountability. Equitable compensation. Governance structures that include the voices of those most affected. Regulatory frameworks that treat racial harm as seriously as financial harm.

The fight for liberation has always included the fight for who gets to control their own image, their own expression, their own narrative. Digital spaces are the newest terrain in that fight. The question for each of us—in every digital interaction—is which side we’re on.

Discussion Questions for Your Community

- Before reading this article, had you encountered the concept of digital blackface? How has your understanding changed or deepened?

- Think about the last week of your digital communication. What patterns do you notice in the images and language you use to express emotion?

- How do you see the “coded gaze”—the embedded prejudices in AI systems—connecting to digital blackface?

- What accountability structures would you support on the platforms you use?

- How do you distinguish between cultural appreciation and cultural appropriation in digital spaces?